Introduction

A knowledge base AI chatbot is a customer support chatbot that retrieves answers from a company’s help center, FAQs, product documentation, and support policies so it can respond accurately, reduce repetitive tickets, and escalate complex issues when needed.

Customer support teams do not struggle because every conversation is complex. They struggle because too many conversations are repetitive. The same questions appear every day across live chat, email, help centers, and in-app support: billing clarifications, onboarding steps, feature how-tos, account permissions, and troubleshooting basics. As SaaS companies grow, that repetitive layer of support consumes more agent time, slows response speed, and makes consistency harder to maintain.

This is why more SaaS teams are not just adding generic AI chatbots. They are building knowledge base AI chatbots that answer from trusted company content. Instead of generating responses from broad internet knowledge or vague prompts, these systems retrieve information from documentation, FAQs, support articles, saved replies, and approved internal guidance. That makes the chatbot more useful, safer, and easier to operationalize inside a real support workflow.

If you are evaluating the broader workflow side of support automation, this topic sits inside the larger shift toward AI customer support automation. But this article focuses on one specific layer of that strategy: how SaaS teams build a grounded, privacy-conscious knowledge-base chatbot that can answer from trusted docs, guide self-service, and hand off cleanly when a human agent should take over.

What Is a Knowledge Base AI Chatbot?

A knowledge base AI chatbot is a support chatbot that uses a company’s own content as the source of truth for customer answers. In practice, that means it pulls from help-center articles, onboarding guides, billing policies, product documentation, troubleshooting steps, and FAQ content instead of relying only on generic model knowledge.

Modern versions of these systems often combine large language models, natural language processing, semantic search, embeddings, and retrieval-augmented generation.

In simple terms, the chatbot interprets the customer’s question, retrieves the most relevant content from trusted sources, and turns that information into a clear answer. The best systems also know when not to answer.
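To make that flow concrete, here is a minimal sketch of "retrieve, then answer or decline." It is not how any particular product works: the `ARTICLES` dictionary, the `answer` function, and the `min_score` threshold are all hypothetical, and plain word-overlap cosine similarity stands in for the embeddings and semantic search a production system would more likely use.

```python
import math
import re
from collections import Counter

# Hypothetical mini knowledge base: article title -> approved text.
ARTICLES = {
    "Reset your password": "To reset your password open the login page and click forgot password",
    "Update billing details": "To update your billing card open settings then billing and edit the payment method",
}

def tokenize(text: str) -> Counter:
    """Lowercase the text and count its word tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def answer(question: str, min_score: float = 0.2) -> dict:
    """Return the best-matching article, or escalate when nothing is close enough."""
    q = tokenize(question)
    title, score = max(
        ((t, cosine(q, tokenize(t + " " + body))) for t, body in ARTICLES.items()),
        key=lambda pair: pair[1],
    )
    if score < min_score:
        # "Knowing when not to answer": no trusted source matched well enough.
        return {"action": "escalate", "source": None}
    return {"action": "answer", "source": title, "text": ARTICLES[title]}
```

In this sketch, a question about resetting a password resolves to the matching article, while an off-topic question falls below the threshold and is routed to a human instead of being answered.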

In a stronger support setup, this is not just a chatbot feature. It becomes an operational layer. With Aily, Inquirly’s private AI knowledge agent, teams can answer from approved support content while keeping tighter control over which documents are used, how answers are grounded, and when the system should escalate instead of improvising. That matters even more when support content includes sensitive troubleshooting steps, billing policies, or internal guidance that teams do not want exposed to third-party AI training.

That distinction matters. A generic AI chatbot may sound fluent, but fluent is not the same as accurate. A knowledge base AI chatbot is designed for operational reliability. Its job is not to improvise. Its job is to answer from approved knowledge, stay consistent, and support the rest of the customer support workflow.

Why Generic AI Chatbots Fail in Customer Support

Generic AI chatbots often fail in customer support for one simple reason: they do not know your company well enough. They may understand language, but they do not automatically understand your pricing model, refund rules, integration limits, permissions logic, onboarding flow, escalation path, or product-specific troubleshooting steps.

That creates four common problems.

First, they give answers that sound reasonable but are not grounded in current documentation. In support, an answer that sounds correct but is slightly wrong can create more tickets than it resolves.

Second, they struggle with policy-driven questions. Billing terms, account security, refunds, cancellations, and service limitations usually require exact responses, not approximate ones.

Third, they create inconsistent experiences across channels. One customer gets a different answer in chat than another gets in email because the system is not anchored to a single source of truth.

Fourth, they often lack clean handoff logic. When the issue becomes technical, urgent, or account-specific, a weak chatbot keeps the customer stuck in a loop instead of escalating with context.

For SaaS teams, that is why “training” a chatbot is rarely about model fine-tuning in the strict machine-learning sense. In most support environments, training means preparing trusted content, connecting the right documents, defining retrieval scope, setting rules, testing responses, and monitoring where the system fails.

Why SaaS Teams Train Chatbots on Knowledge Base Content

SaaS companies are especially well suited to knowledge-based support automation because their support patterns are highly structured. Most products have recurring ticket categories tied to activation, usage, permissions, billing, integrations, and troubleshooting. Those topics already live inside support documentation in some form, which makes them suitable for a retrieval-based chatbot.

A strong knowledge base AI chatbot helps SaaS teams in several ways. Common questions can be answered immediately, which improves first-response speed. Approved documentation also makes support more consistent. Self-service becomes more useful because customers can get precise guidance without waiting in a queue. Agent efficiency improves too, since repetitive tickets are deflected or partially resolved before a human joins the conversation.

It also supports scale more cleanly than a generic chatbot strategy. SaaS teams usually need a system that can answer across multiple product areas, respect account context, tag conversations by intent, and escalate when a request becomes account-sensitive or technically complex. That is much easier when the chatbot is connected to a structured knowledge layer instead of operating as a loose conversational tool.

In practical terms, training a chatbot on your knowledge base turns support documentation into an operational asset. The help center stops being a static library and becomes an active part of the support experience.

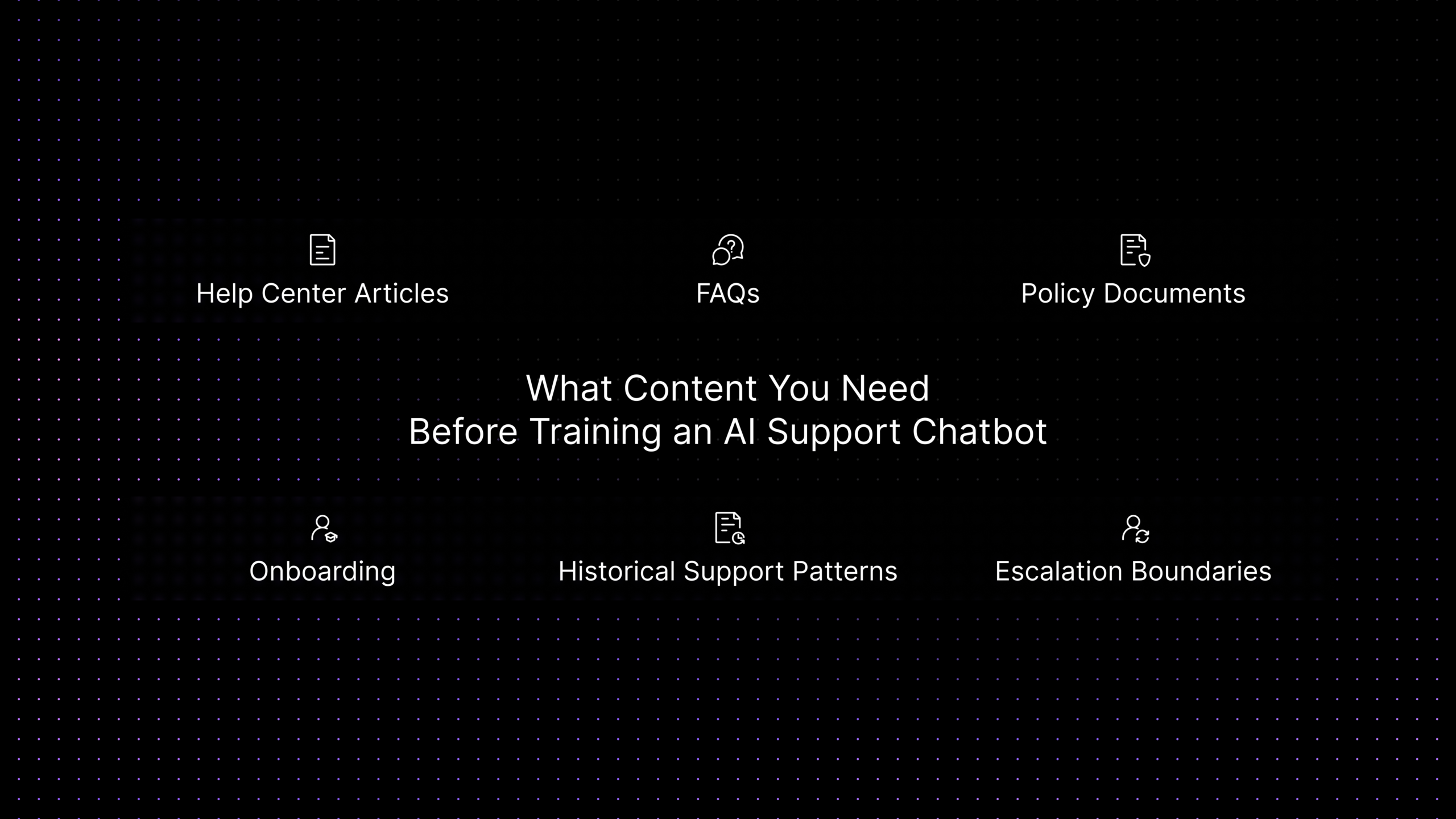

What Content You Need Before Training an AI Support Chatbot

Before you connect any chatbot to your support environment, you need to prepare the content it will rely on. This is one of the most important parts of the rollout because AI does not fix weak documentation. It only exposes weak documentation faster.

At minimum, most SaaS teams should prepare these content types:

- Help-center articles for common product questions. These usually cover setup steps, permissions, integrations, feature usage, and common troubleshooting flows.
- FAQ content for recurring support questions. This is especially useful for short, direct questions about billing, account access, plan limits, login issues, and navigation.
- Policy documents. Refund terms, cancellation rules, SLA terms, data policies, and security guidance should be clear, current, and easy to retrieve.
- Onboarding and implementation guides. These help the chatbot support trial users, new customers, and admins configuring the product for the first time.
- Historical support patterns. Resolved tickets, macros, and saved replies can reveal which questions appear most often and where customers still get confused.
- Escalation boundaries. Not everything should be answered by AI. Document which issues require human review, such as security incidents, billing disputes, bug investigations, or account-specific contract questions.

This preparation stage is also where teams should clean up duplicated articles, remove outdated instructions, standardize product naming, and separate public knowledge from internal-only support guidance.

How to Train an AI Chatbot on Your Knowledge Base

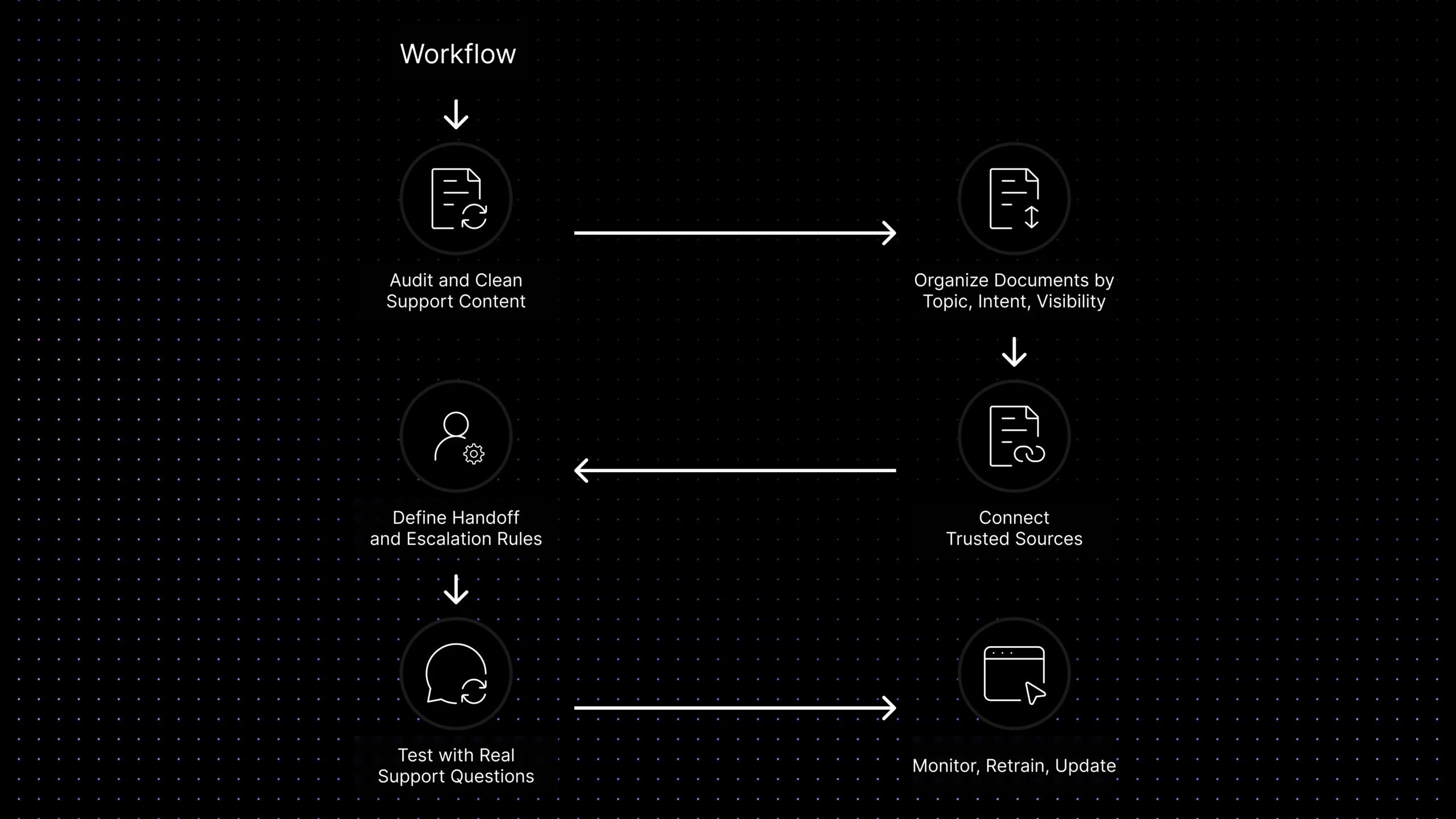

Training an AI chatbot on your knowledge base usually means building a controlled retrieval workflow rather than teaching the model from scratch. The goal is to make sure the chatbot pulls from the right documents, answers in the right way, and escalates at the right time.

Step 1: Audit and clean your support content

Review your highest-volume ticket categories and map them to existing content. Remove outdated articles, merge duplicates, fix broken steps, and update policy language. If your documentation is unclear, the chatbot will inherit that problem.
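One way to start the audit is to compare ticket volume against a content inventory. The sketch below is illustrative only: the category names, the `audit` function, and the 365-day staleness cutoff are assumptions, not a recommended standard.

```python
from datetime import date

# Hypothetical inputs: ticket counts per category and a content inventory.
ticket_volume = {"billing": 420, "sso-setup": 310, "api-limits": 150}
articles = [
    {"title": "Understanding your invoice", "category": "billing", "updated": date(2024, 11, 2)},
    {"title": "Configure SSO", "category": "sso-setup", "updated": date(2022, 3, 14)},
]

def audit(ticket_volume, articles, today, max_age_days=365):
    """Find ticket categories with no article, and articles not updated recently."""
    covered = {a["category"] for a in articles}
    # Uncovered categories, highest ticket volume first.
    gaps = sorted(
        (c for c in ticket_volume if c not in covered),
        key=lambda c: -ticket_volume[c],
    )
    stale = [a["title"] for a in articles if (today - a["updated"]).days > max_age_days]
    return {"gaps": gaps, "stale": stale}

report = audit(ticket_volume, articles, today=date(2025, 1, 15))
```

Here `report["gaps"]` lists categories customers ask about that have no article yet, and `report["stale"]` lists articles that are due for review before the chatbot relies on them.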

Step 2: Organize documents by topic, intent, and visibility

A support chatbot works better when billing content, onboarding docs, troubleshooting articles, role-permission guidance, and feature education are clearly separated into controlled groups. That makes it easier to decide what the assistant should retrieve for each type of question instead of searching everything equally.
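A rough sketch of what "controlled groups" can look like in practice: each document carries a group and a visibility flag, and the assistant searches only the slice that matches the question's intent and the audience. The `Doc` fields, the `INTENT_GROUPS` mapping, and the audience names are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Doc:
    title: str
    group: str        # e.g. "billing", "onboarding", "troubleshooting"
    visibility: str   # "public" or "internal"

DOCS = [
    Doc("Understanding your invoice", "billing", "public"),
    Doc("Refund exception playbook", "billing", "internal"),
    Doc("Invite teammates", "onboarding", "public"),
]

# Hypothetical mapping from detected intent to the groups worth searching.
INTENT_GROUPS = {
    "billing_question": {"billing"},
    "setup_help": {"onboarding", "troubleshooting"},
}

def retrieval_scope(intent: str, audience: str) -> list:
    """Return only the documents this intent and audience are allowed to search."""
    groups = INTENT_GROUPS.get(intent, set())
    allowed = {"public"} if audience == "customer" else {"public", "internal"}
    return [d for d in DOCS if d.group in groups and d.visibility in allowed]
```

With this scoping, a customer-facing billing question never touches the internal refund playbook, while an agent-assist view of the same question can.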

Step 3: Connect the chatbot to trusted sources

This is where many teams think they are “training” the bot. In reality, they are deciding what the bot can retrieve from and what it should never use. A good setup may include help-center articles, FAQs, troubleshooting guides, public documents, selected internal support notes, and approved macros. The objective is not to give the chatbot more content. It is to give it the right content, with the right visibility and the right controls. For many SaaS teams, that also means ensuring the platform does not use connected documents to train third-party AI models outside the support workflow.

Step 4: Define handoff and escalation rules

Set clear rules for when automation should stop. Account-specific billing disputes, bug reports requiring reproduction, sensitive security questions, angry customers, and VIP requests should not stay in an automated loop. The system should gather context and then escalate.
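Handoff rules like these can be written down as explicit checks rather than left to the model's judgment. The following is a minimal sketch under assumed inputs: the intent labels, the sentiment score range, and the thresholds are placeholders that a team would define for itself.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Conversation:
    intent: str
    sentiment: float      # hypothetical score from -1.0 (angry) to 1.0 (happy)
    is_vip: bool = False
    bot_turns: int = 0    # how many replies the bot has already sent

# Intents that should always go to a human (assumed labels).
ESCALATE_INTENTS = {"billing_dispute", "bug_report", "security_question"}

def escalation_reason(c: Conversation, max_bot_turns: int = 3,
                      min_sentiment: float = -0.5) -> Optional[str]:
    """Return why the conversation should be handed off, or None to keep automating."""
    if c.intent in ESCALATE_INTENTS:
        return f"intent:{c.intent}"
    if c.is_vip:
        return "vip"
    if c.sentiment < min_sentiment:
        return "negative_sentiment"
    if c.bot_turns >= max_bot_turns:
        return "loop_guard"   # stop the customer getting stuck in a loop
    return None
```

Returning a reason rather than a bare yes/no means the handoff can carry context to the agent, which is the point of escalating cleanly.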

Step 5: Test with real support questions

Do not test the bot with idealized prompts only. Use the exact wording customers use in real tickets. Ask vague questions, incomplete questions, and multi-part questions. See where the chatbot selects the wrong article, over-explains, or fails to ask a clarifying question.
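A small regression harness makes this kind of testing repeatable. In the sketch below, `CASES` pairs real-sounding ticket wording with the article the bot should select (or `None` when it should clarify or escalate), and `keyword_retriever` is a deliberately naive stand-in for whatever retrieval your platform actually performs. All names and cases are hypothetical.

```python
from typing import Callable, Optional

# Hypothetical test cases written the way customers actually phrase tickets.
CASES = [
    ("cant log in after changing email??", "Fix login issues"),
    ("charged twice", "Understanding your invoice"),
    ("it doesn't work", None),  # too vague: the bot should not pick an article
]

def evaluate(retrieve: Callable[[str], Optional[str]], cases):
    """Run every case through the retriever and collect the mismatches."""
    failures = []
    for question, expected in cases:
        got = retrieve(question)
        if got != expected:
            failures.append({"question": question, "expected": expected, "got": got})
    accuracy = 1 - len(failures) / len(cases)
    return accuracy, failures

def keyword_retriever(question: str) -> Optional[str]:
    """Naive stand-in retriever, used only to exercise the harness."""
    q = question.lower()
    if "log in" in q or "login" in q:
        return "Fix login issues"
    if "invoice" in q:
        return "Understanding your invoice"
    return None

accuracy, failures = evaluate(keyword_retriever, CASES)
```

The stand-in retriever misses "charged twice" because the customer never used the word "invoice," which is exactly the kind of gap that idealized prompts would hide.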

Step 6: Monitor, retrain, and update

Support documentation changes constantly. New features ship. Pricing changes. Workflows evolve. Training a knowledge base chatbot is an ongoing content operation. Review failed conversations, content gaps, and escalation patterns regularly so the bot improves with the product.

The most accurate support chatbots are not the ones with the largest model claims. They are the ones with the cleanest knowledge base, the clearest boundaries, and the most disciplined review process.

Knowledge Base AI Chatbot vs Generic AI Chatbot

This comparison helps buyers separate grounded support automation from a general-purpose conversational bot.

| Area | Knowledge Base AI Chatbot | Generic AI Chatbot |
|---|---|---|
| Source of truth | Answers from approved docs, FAQs, and support content | Answers may rely on broad model knowledge or loose prompting |
| Accuracy in support | Higher when documentation is current and well organized | More likely to sound correct without reflecting company policy |
| Best use case | Repetitive support questions, onboarding, billing guidance, and article deflection | General conversation, brainstorming, or non-operational Q&A |
| Handoff design | Usually includes escalation rules and workflow context | Often weak unless custom logic is added |
| Maintenance model | Requires document updates, testing, and source control | Requires prompt tuning but may still drift without grounding |
| Buyer fit | Better for SaaS support teams that need consistency and accountability | Better for lightweight conversational use cases, not core support workflows |

Best Practices to Improve Accuracy and Reduce Hallucinations

The simplest way to reduce hallucinations is to reduce ambiguity. The chatbot should answer from defined sources, not from whatever it can infer conversationally.

Keep your source set narrow and trusted. It is better to use a smaller group of approved articles than a large content pile full of outdated material.

Write support content for retrieval, not only for browsing. Long articles with vague headings are harder for a chatbot to use well. Clear section titles, direct steps, and concise answers improve retrieval quality.
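Clear section titles matter because many retrieval setups index articles in sections rather than as whole pages. As an illustration, the sketch below splits an article on heading lines so each section can be retrieved on its own. The `## ` heading marker, the `split_by_heading` function, and the sample article are assumptions; your help center's format may differ.

```python
def split_by_heading(article: str, marker: str = "## ") -> list:
    """Split an article into retrievable chunks, one per heading."""
    chunks = []
    title, lines = "Overview", []   # text before the first heading

    def flush():
        # Only keep sections that contain some non-blank text.
        if any(line.strip() for line in lines):
            chunks.append({"heading": title, "text": "\n".join(lines).strip()})

    for line in article.splitlines():
        if line.startswith(marker):
            flush()
            title, lines = line[len(marker):].strip(), []
        else:
            lines.append(line)
    flush()
    return chunks

ARTICLE = """Intro text about exports.
## Export as CSV
1. Open Reports.
2. Click Export.
## Export limits
Exports are capped per plan.
"""

chunks = split_by_heading(ARTICLE)
```

A question about export limits can now match the short "Export limits" section directly instead of competing with the rest of the page, which is harder when headings are vague or missing.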

Separate public knowledge from internal guidance. Customers do not need every internal diagnostic note, and agents do not need the bot exposing unfinished procedures.

Add escalation rules early. The bot should know when to stop and route to a human rather than forcing a weak answer.

Review failed conversations every week. Look for cases where the system chose the wrong document, answered too broadly, or missed the user’s intent entirely. Those failures usually reveal either a content problem or a rules problem.
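A weekly review is easier to act on when failures are tallied by likely cause. The sketch below assumes a reviewer has already labeled each failed conversation; the label names and the split between content causes and rules causes are illustrative choices, not a fixed taxonomy.

```python
from collections import Counter

# Hypothetical review log; the "failure" labels are assigned by a human reviewer.
reviewed = [
    {"id": 101, "failure": "wrong_document"},
    {"id": 102, "failure": "missed_escalation"},
    {"id": 103, "failure": "outdated_article"},
    {"id": 104, "failure": "answered_too_broadly"},
    {"id": 105, "failure": "wrong_document"},
]

# Assumed grouping of labels into the two root causes.
CONTENT_FAILURES = {"wrong_document", "missing_article", "outdated_article"}
RULES_FAILURES = {"answered_too_broadly", "missed_escalation", "missed_intent"}

def weekly_summary(reviewed):
    """Count failures per label and roll them up into content vs rules causes."""
    by_label = Counter(r["failure"] for r in reviewed)
    by_cause = Counter()
    for label, n in by_label.items():
        if label in CONTENT_FAILURES:
            by_cause["content"] += n
        elif label in RULES_FAILURES:
            by_cause["rules"] += n
        else:
            by_cause["unclassified"] += n
    return by_label, by_cause

by_label, by_cause = weekly_summary(reviewed)
```

If content causes dominate, the fix is usually in the documentation; if rules causes dominate, the retrieval scope or escalation logic needs attention.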

Treat FAQ generation as a maintenance workflow, not a one-time project. When new questions appear repeatedly, they should become documented knowledge so the chatbot improves over time.

What to Look for in a Knowledge Base Chatbot Platform

For a buyer, the most important question is not whether a platform offers AI. It is whether the platform can turn your documentation into a reliable support workflow.

Start with knowledge control.

The platform should sync the right documents, separate public and internal knowledge, organize content by groups or labels, and update sources when documentation changes.

Then look at operational fit.

A strong platform should hand conversations to humans cleanly, support labels and routing logic, preserve ticket context, and work with the inboxes and channels your team already uses.

Accuracy and testing also matter.

Teams should be able to test responses before rollout, review which sources were used, and identify failed intents, content gaps, and repeated escalations.

Privacy and governance matter too.

If the platform connects to support documentation, internal notes, FAQs, or synced documents, buyers should understand exactly how that content is handled. Can the team control which documents are available to the bot? Can it separate internal and public knowledge? Can it limit retrieval by group, label, or assistant scope? And can it ensure that proprietary support content is not used to train third-party AI models?

Finally, consider maintainability.

A support chatbot should not become another disconnected tool that your team avoids updating. The strongest platforms make it easier to manage documents, generate or refine FAQ content, review performance, and improve the system continuously.

At this stage, buyers often realize that brand familiarity matters less than operational fit. The right platform is the one that gives the team reliable document control, grounded answers, clean escalation, and a support workflow that stays manageable as knowledge changes over time.

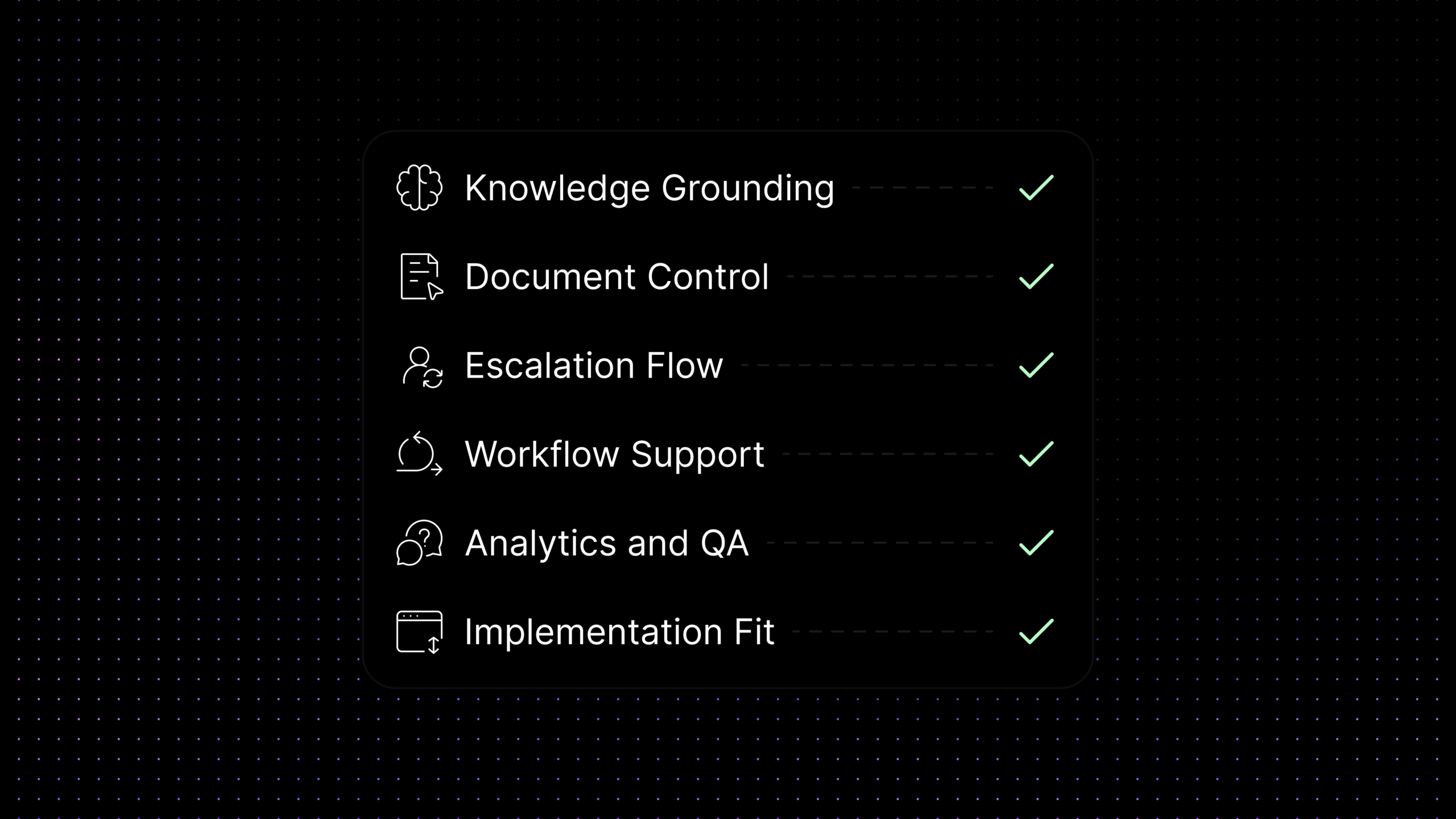

Evaluation Checklist for Buyers

| Evaluation area | Why it matters | What to ask |
|---|---|---|
| Knowledge grounding | Determines whether answers come from trusted company sources | Can the chatbot answer from our help center, FAQs, docs, and approved internal sources? |
| Document control | Improves quality and governance | Can we organize content by groups, visibility, labels, or collections? |
| Escalation flow | Protects customer experience when automation should stop | Can it hand off to human agents with context, intent, and article history included? |
| Workflow support | Makes the bot operational instead of cosmetic | Can it tag, route, summarize, or support team workflows after the conversation starts? |
| Analytics and QA | Shows where the bot is helping and where it is failing | Can we track containment, failed intents, escalation patterns, and content gaps? |
| Implementation fit | Reduces rollout friction | Does it fit our current inboxes, help desk, CRM, and support channels without a heavy rebuild? |
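For the analytics row, it helps to know what the numbers would look like before asking a vendor for them. The sketch below computes a containment rate (taken here to mean the share of bot conversations that ended without a human handoff), escalations by intent, and intents where no article matched. The log fields and the `support_metrics` function are hypothetical, and platforms may define containment differently.

```python
from collections import Counter

# Hypothetical conversation log exported from a support platform.
conversations = [
    {"intent": "billing", "escalated": False, "matched_article": "Understanding your invoice"},
    {"intent": "sso-setup", "escalated": True, "matched_article": None},
    {"intent": "billing", "escalated": False, "matched_article": "Update billing details"},
    {"intent": "api-limits", "escalated": True, "matched_article": None},
]

def support_metrics(convs):
    """Summarize containment, escalation patterns, and likely content gaps."""
    total = len(convs)
    escalated = [c for c in convs if c["escalated"]]
    return {
        # Share of conversations that never reached a human.
        "containment_rate": (total - len(escalated)) / total if total else 0.0,
        "escalations_by_intent": Counter(c["intent"] for c in escalated),
        # Intents where retrieval found nothing to answer from.
        "content_gaps": sorted({c["intent"] for c in convs if c["matched_article"] is None}),
    }

metrics = support_metrics(conversations)
```

If a platform cannot export something close to this log, tracking containment and content gaps will be difficult regardless of what its dashboard shows.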

Where Inquirly Fits

For teams evaluating tools, the strongest knowledge-base chatbot platforms do more than answer questions. They help teams control the content, workflow, and guardrails behind those answers.

That is where Inquirly stands out. With support for platform documents, document groups, FAQ generation from documents, assistants tied to document keys, conversation labels, and broader support workflows, Inquirly is built for teams that want grounded support automation rather than a generic chat widget.

This is also where Aily becomes important. Aily can work as a private AI knowledge agent that answers from approved support content, stays aligned with document scope, and hands conversations off with more structure when a human should take over. For SaaS teams, that means the chatbot can become more accurate without becoming harder to govern.

The practical bottom-of-funnel (BOFU) question is not just “Can this bot answer from our docs?” It is “Can this platform help us organize knowledge, control retrieval, support escalation, and improve support operations without exposing proprietary documentation to third-party AI training?” That is the evaluation path where Inquirly is strongest.

Conclusion

A knowledge base AI chatbot is not just another AI feature for support teams. For SaaS companies, it is a way to turn help content, product documentation, and support workflows into a faster and more scalable customer experience.

The most effective teams do not start by asking which chatbot sounds the smartest. They start by asking which content is trustworthy, which ticket categories are repetitive, which questions should be automated first, and where human agents still need to lead. That is what makes a chatbot operationally useful instead of superficially impressive.

If your team is evaluating how to train an AI chatbot on your docs, FAQs, and support content, focus on grounding, handoff design, testing, and workflow fit. And if you want a platform that helps you organize documents, generate FAQ content, personalize support experiences, and manage conversations with more structure, Inquirly is well positioned to support that evaluation path.