A practical guide for SaaS support teams that want better SLA health without piling on more tools

A support SLA is the response and resolution commitment a support team uses to manage ticket urgency, ownership, and escalation. Preventing SLA breaches depends on clear ownership, early escalation, and timers tied to the right priority rules.

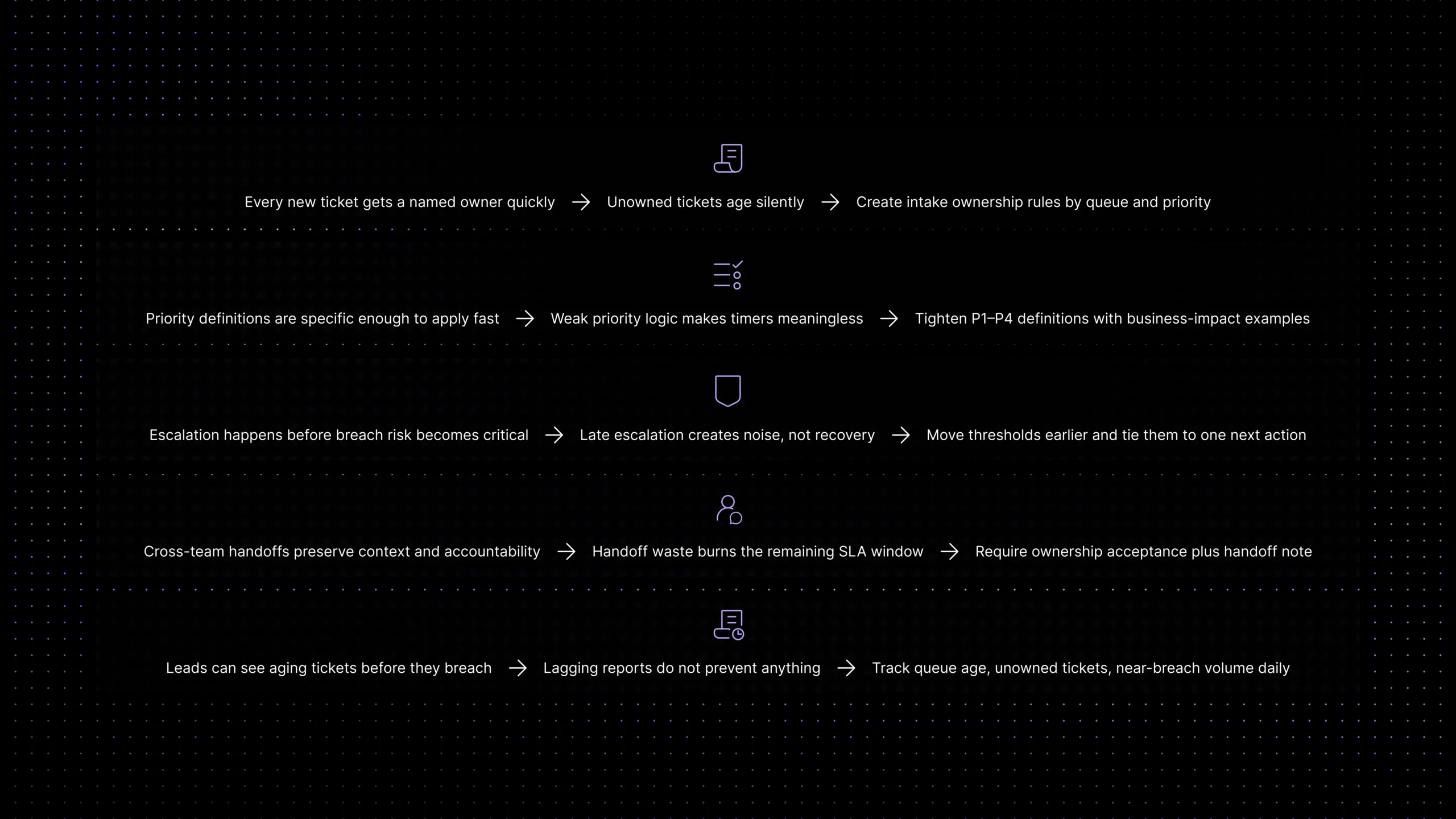

Most support teams do not miss SLAs because they forgot to create a timer. They miss them because the work around the timer is weaker than it looks. A ticket sits in the wrong queue for too long. A handoff happens without a clear owner. Priority gets updated late. An escalation rule exists, but it fires after the real risk has already passed.

In SaaS, that problem compounds quickly. The more queues, products, plans, customers, and channels a team supports, the easier it becomes for a ticket to look “active” while nobody is truly responsible for moving it forward. On paper the workflow exists. In practice, the ticket is drifting toward a breach.

That is why support SLA management is really an ownership problem as much as a timing problem. Teams that keep SLA health strong usually do three things well: they make ownership obvious, they escalate before a ticket becomes dangerous, and they watch leading indicators instead of waiting for a miss to appear on a weekly report.

This guide breaks that down in practical terms. It explains what support SLA management means in day-to-day operations, why breaches happen in growing support teams, and how to use ownership rules plus an escalation matrix to prevent avoidable overdue tickets. The goal is not just to react to missed timers faster. It is to make breach risk visible early enough that the team can still change the outcome.

What support SLA management means in practice

Support SLA management is the operating system around response and resolution promises. It includes how priorities are set, how timers start, who owns the ticket at each stage, when a ticket escalates, and what happens if progress stalls between teams.For a clear industry definition, Atlassian explains how service level agreements (SLAs) define response and resolution expectations, which is why support teams rely on them to manage urgency and accountability.

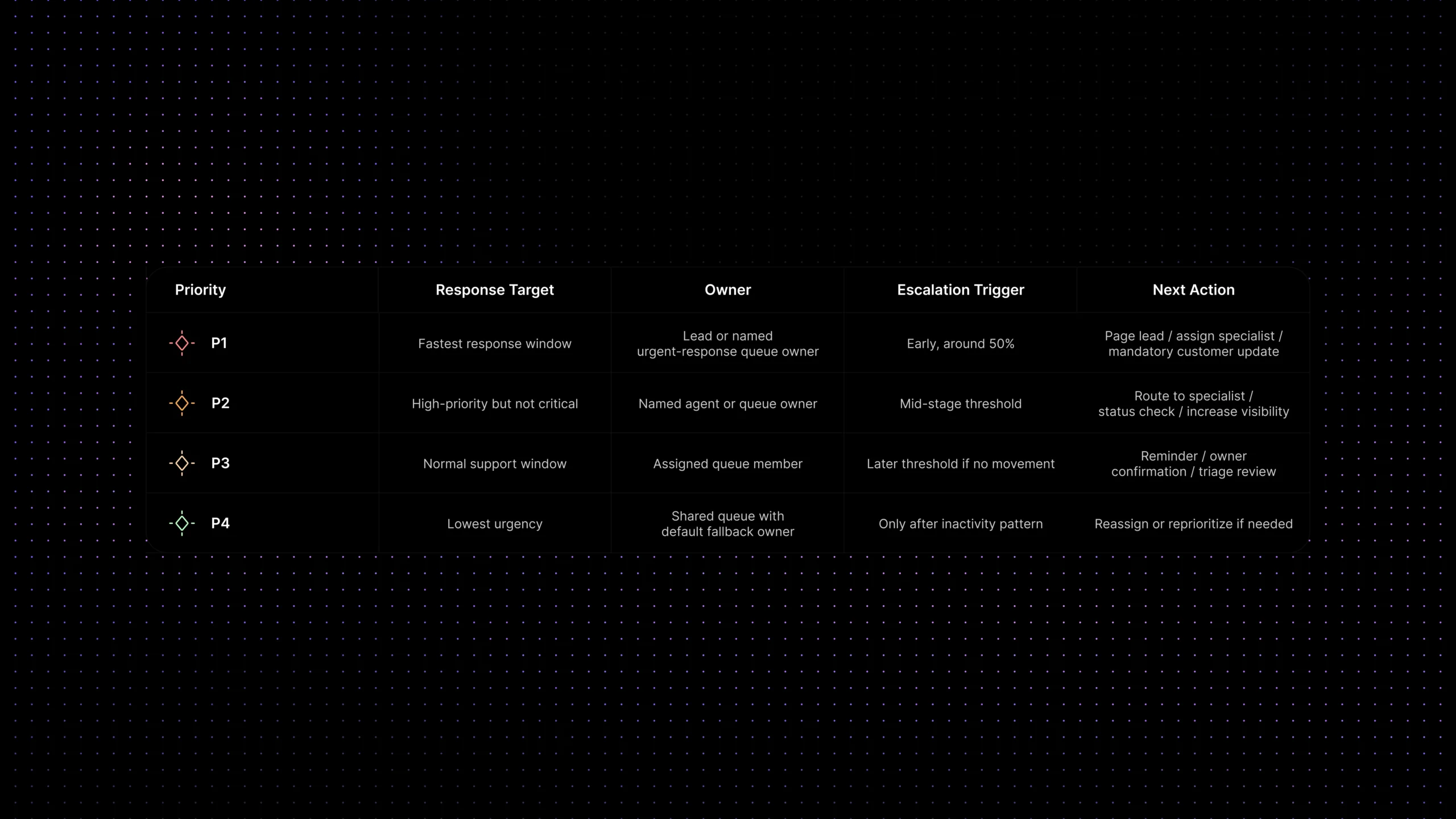

In other words, a support SLA is not just a deadline. It is a set of rules that tell the team what good movement looks like before a breach happens. A healthy setup usually includes a response SLA, a resolution SLA, priority definitions such as P1 through P4, ownership rules, and escalation thresholds tied to risk rather than guesswork.

That is also why the term overlaps with customer support SLA and SLA management without meaning exactly the same thing. SLA management is the broad category. Customer support SLA narrows it to support work. Support SLA is the most operational phrasing because it points directly to the queue, the owner, and the clock running inside the team.

Why SLA breaches happen in growing support teams

The first reason is silent ownership loss.

A ticket may technically belong to a queue, but not to a person who feels accountable for the next step. That gap is where many breaches are born. Teams think they have coverage because the queue is monitored, but the ticket is effectively unowned.

The second reason is late escalation.

Many teams escalate when a ticket is already close to breach. By then the best options are gone. The right specialist is not available, the customer has already waited too long, or the next queue inherits a problem it cannot realistically fix inside the remaining window.

The third reason is weak priority hygiene.

If priority is set too late, or if P1, P2, P3, and P4 definitions are vague, timers stop reflecting actual business urgency. Tickets that should move fast get treated like routine work. Tickets that are merely noisy steal attention from truly time-sensitive issues.

This matters even more when urgency depends on account value, contract risk, billing tier, or strategic customer status. If the workflow cannot surface those signals safely and quickly, the team may treat a high-value ticket like routine work until the remaining SLA window is too small to recover.

The fourth reason is broken handoffs.

Support teams scale by splitting work across roles, products, and escalation paths, but every handoff adds breach risk. If the receiving team does not inherit full context, explicit ownership, and a clear next action, the clock keeps moving while the ticket pauses.

The final reason is that many teams watch lagging indicators only.

They measure breach count after the damage is done instead of watching queue age, reassignment rate, aging unowned tickets, and escalation latency while there is still time to intervene.

The ownership rules that stop silent tickets

If you want to prevent SLA breaches, start with ownership before automation. A good ownership rule answers one question at every stage of the workflow: who is responsible for the next movement on this ticket right now?

That sounds obvious, but many queues still rely on shared attention instead of explicit accountability. Shared attention can work for low-risk tickets. It fails for breach prevention. The more urgent the ticket, the less acceptable it is to let ownership live at the queue level only.

A practical ownership model usually has four layers. First, define who owns a brand-new ticket at intake. Second, define who owns it after triage. Third, define who owns it during a specialist handoff. Fourth, define who owns breach recovery if the ticket gets too close to the timer.

Support leaders often make a simple mistake here: they assign people to tickets but do not assign ownership states. The agent is visible in the system, but nobody knows whether that person is expected to respond, investigate, update the customer, or re-route. Clear states reduce that ambiguity and make escalation rules far more effective.

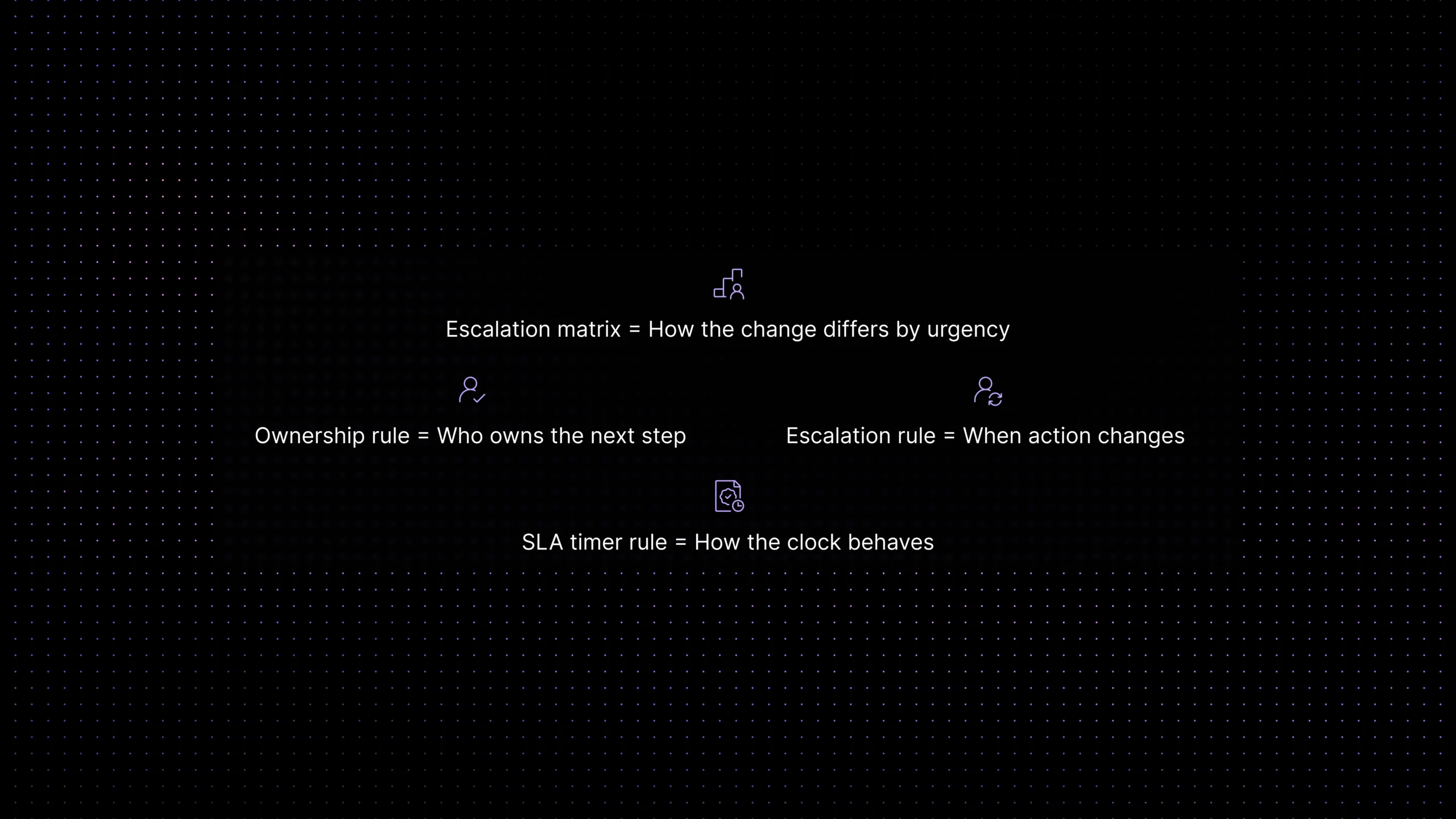

Escalation rules vs escalation matrix

These terms are related, but they are not interchangeable. In practice, teams need all three layers below if they want strong SLA health.

The matrix matters because it forces the team to decide what changes by urgency. A P1 ticket should not follow the same escalation path as a low-risk backlog item. When teams skip that design step, they end up with rule-heavy systems that still miss critical tickets at the worst moment.

How to design a support escalation workflow that prevents breaches

Start by mapping the points where a ticket can stall before a breach becomes visible. For most SaaS teams, that includes intake, triage, specialist handoff, waiting on customer reply, waiting on engineering, and waiting on approval for an exception or workaround.

Then set escalation thresholds before the SLA expires. A useful rule of thumb is to escalate at 50 to 75 percent of the timer depending on priority and queue volatility. High-priority queues need earlier intervention because recovery time is shorter. Lower-priority queues can tolerate later checks as long as ownership remains clear.

Next, define what escalation actually changes. A weak escalation only sends a notification. A stronger escalation changes ownership, raises visibility, or triggers a concrete action such as paging a lead, re-routing the ticket, or requiring a status update to the customer.

Finally, document the matrix in plain language. The people working the queue should not need to interpret policy under pressure. For each priority, spell out the response target, the current owner, the escalation trigger, and the next action.

Breach-prevention checklist

Use this as a quick audit before you blame the team for missed SLAs.

What to monitor before a ticket goes overdue

The best SLA programs monitor risk, not just outcomes. One of the simplest metrics is the number of tickets with no clear owner after a fixed period. Another is near-breach volume by queue, because a queue full of almost-late tickets usually points to design problems long before the weekly breach report does.

Queue age is another early warning. If average queue age is rising while first response time still looks fine, the team may be acknowledging tickets quickly but not resolving blockers fast enough. Reassignment rate is equally useful. When tickets change hands too often, ownership is unstable and SLA risk rises even if the system still shows activity.

Support leaders should also watch escalation latency: how long it takes for a ticket to move from breach risk to active intervention. If the matrix exists but escalation happens slowly, the issue is usually not policy. It is workflow visibility, role clarity, or weak accountability in the queue.

Common SLA management mistakes

A common mistake is building the timer and assuming the process will naturally adapt. It rarely does. Timers measure risk, but they do not create accountability. Another mistake is using one escalation path for every priority. That makes the system look standardized while hiding the fact that critical tickets need faster, different intervention.

Teams also over-index on breach count without asking where the timer was lost. A breach can begin at intake, at routing, during a handoff, or while waiting on internal follow-up. If you only review the final miss, you will keep solving symptoms instead of the stage that actually failed.

One more mistake is expecting SLA rules to solve every support problem. Strong rules help, but they do not fix bad routing, missing customer context, weak self-service entry points, or fragmented communication tools on their own. That is why this topic naturally connects to broader work on support ticket automation, customer self-service for SaaS, unified customer context in support, and customer support workflow audit.

Where Inquirly fits

For SaaS teams, SLA prevention works best when ownership, routing, ticket state, and conversation history all live in one operating layer. That is the standard to evaluate against. The goal is not just to count timers. It is to make the next responsible action obvious while there is still time to protect the customer experience.

That is where Inquirly fits naturally. Teams trying to improve support SLA health usually need more than a timer field. They need workflows that route work correctly, tickets that carry context forward, shared visibility into aging conversations, and knowledge that helps resolve issues before the queue becomes reactive.

Conclusion

The easiest way to think about support SLA management is this: timers tell you when risk exists, but ownership and escalation decide whether that risk turns into a breach. Teams that stay on top of SLA health do not wait for the queue to self-correct. They define who owns the next step, when that ownership should change, and what happens before the deadline becomes unrecoverable.

If overdue tickets are becoming normal, start small. Tighten ownership at intake. Move escalation thresholds earlier. Make the escalation matrix explicit. Then watch whether near-breach tickets become easier to recover. That sequence usually does more for SLA health than adding another dashboard ever will.